AI governance in higher education is the structured system of policies, oversight committees, ethical standards, and risk controls universities use to manage AI across teaching, research, and administration, going beyond IT policy to address accountability, data privacy, and academic integrity.

Artificial intelligence is advancing in higher education faster than institutional policy can keep up. Faculty are experimenting with generative AI tools, students are submitting AI-assisted work, and administrative departments are automating everything from admissions to advising.

Yet most universities are facing a governance gap.

According to EDUCAUSE’s 2024 AI Landscape Study, 80% of faculty and staff use AI tools, yet fewer than one in four are aware of a formal institutional policy. The result is a rapidly growing layer of shadow AI: unsanctioned tools operating entirely outside institutional oversight.

For university leaders, the question is no longer whether AI will shape higher education; it is whether institutions will govern it responsibly before a data breach, regulatory violation, or integrity crisis forces their hand.

That is precisely what AI governance in higher education is designed to prevent.

Why AI Governance Is Becoming Critical for Universities

Universities are inherently open environments built for experimentation and academic freedom.

These values drive innovation, but they also create governance blind spots when powerful technologies enter the ecosystem without structure, a tension explored in depth in our look at how AI is already reshaping university teaching, research, and governance.

Three forces are making AI governance in higher education urgent in 2026.

1. Regulatory Pressure is Accelerating

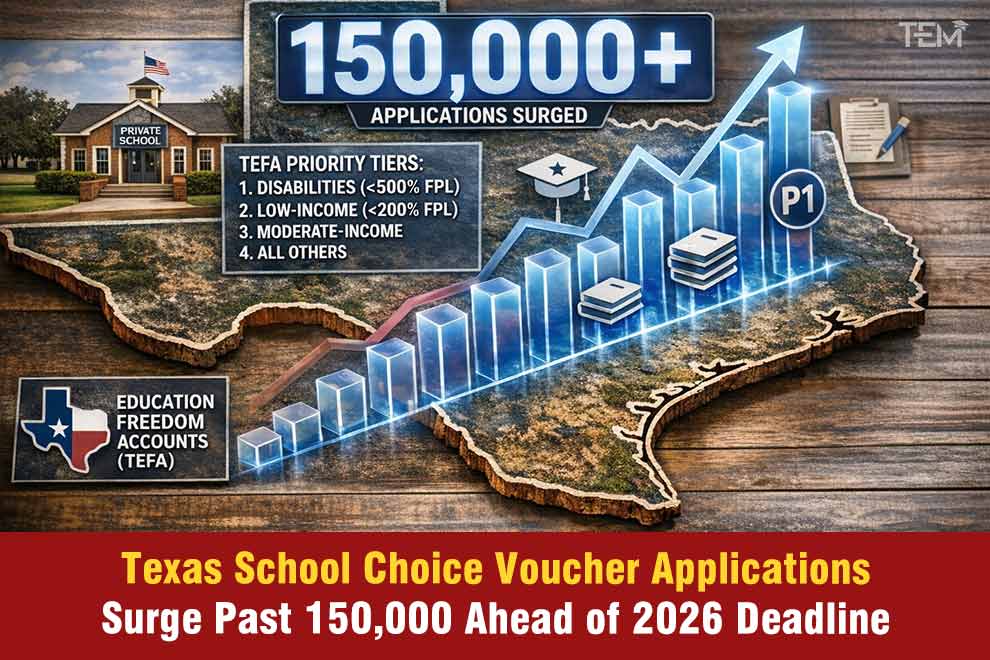

The EU AI Act, which entered into force in August 2024, classifies several AI applications common in universities, including AI-assisted admissions systems and student performance analytics, as “high-risk.” European universities must be able to demonstrate compliance before these obligations take effect or face penalties.

In the United States, the Department of Education’s 2023 guidance on AI explicitly calls on institutions to develop ethical frameworks for AI use in student services and assessment.

FERPA, meanwhile, strictly limits how student education records can be disclosed to and processed by third-party AI systems.

2. Adoption has Outpaced Policy

Generative AI platforms are now embedded across research workflows, automated grading, tutoring systems, and administrative automation. Without governance, practices become inconsistent and unauditable across departments, creating compliance risks that may only surface during an external audit or after an incident.

3. Public Trust is at Stake

UNESCO’s Recommendation on the Ethics of AI warns that institutions failing to govern AI transparently risk eroding the public legitimacy that universities depend on. That trust, once lost in a high-profile AI incident, is difficult to recover.

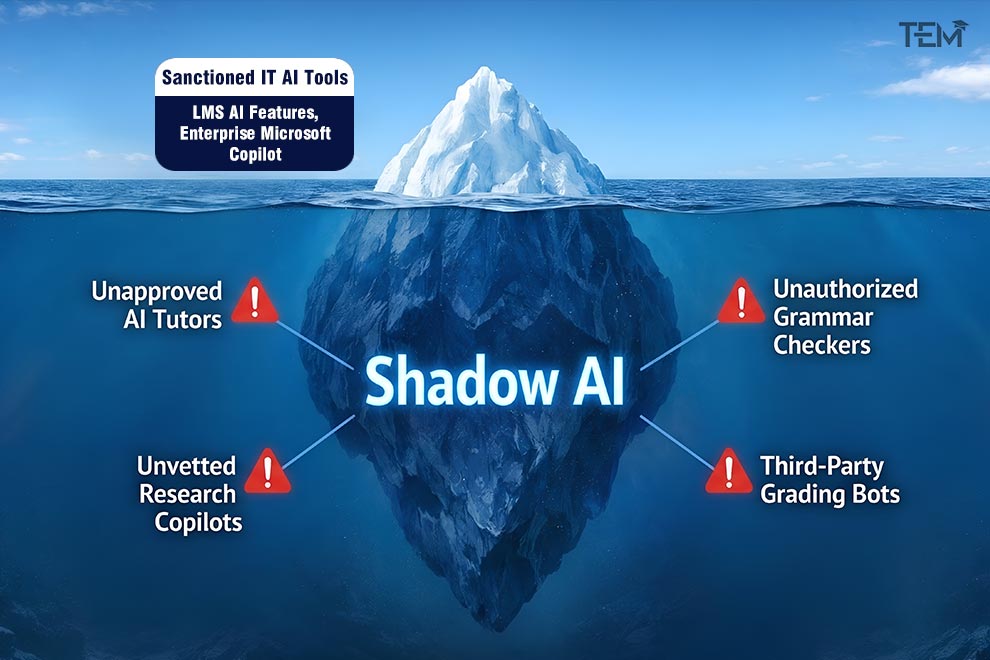

The “Shadow AI” Problem on Campus

Shadow AI, meaning AI tools adopted by faculty, staff, or students without institutional approval, represents one of the most underestimated risk vectors in higher education today.

EDUCAUSE research consistently finds that universities have far more AI tools operating across their campuses than IT departments are aware of.

These include AI writing assistants, research automation tools, coding copilots, data analysis platforms, and AI tutoring systems, many of which operate entirely outside the university’s data governance perimeter.

The risks are concrete:

- Data Leakage: When a professor uploads an unpublished research dataset to a commercial AI platform, that data may be stored, logged, or used for model training. The university loses control of its intellectual property with no audit trail.

- FERPA Violations: If student performance records are processed by an unapproved AI system, the institution may violate federal privacy law even if the faculty member had no intent to breach compliance.

- Cybersecurity Exposure: AI tools connected informally to campus systems bypass the security reviews that approved enterprise software undergoes.

The governance lesson is clear: awareness campaigns alone are insufficient. Institutions need active monitoring, not just policy documents.

Core Pillars of an AI Governance Framework

Effective AI governance in higher education is not an IT function; it is an institutional one.

Guidance from UNESCO, the OECD’s AI Principles, and the UK’s Higher Education Policy Institute (HEPI) consistently identifies four pillars that distinguish mature governance frameworks from ad hoc policy responses.

1. Institutional AI Steering Committees

Governance must begin at the leadership level. Universities, including the University of Edinburgh, which published one of the first comprehensive institutional AI governance frameworks in UK higher education in 2024, have structured oversight around cross-functional committees composed of academic leadership, IT, legal, research ethics, and faculty representatives.

These committees are responsible for defining acceptable use policies, reviewing AI vendors, monitoring emerging risks, and advising senior leadership. Critically, they prevent AI decisions from defaulting entirely to IT departments, ensuring academic values remain central to governance.

2. Ethical AI Principles in Practice

Ethical governance goes beyond publishing principles; it operationalises them:

A. Transparency

Students and faculty must know when AI is involved in academic or administrative decisions. AI-assisted grading, automated feedback, and algorithmic admissions screening should be disclosed.

The EU AI Act makes transparency mandatory for high-risk AI applications; institutions operating in Europe have no choice.

B. Fairness and Bias Auditing

AI systems trained on historical datasets can reproduce structural inequalities. Arizona State University’s AI Task Force, established in 2023, requires bias testing before any AI tool is deployed in student-facing applications, a model that other institutions are beginning to adopt.

C. Accountability

Final decisions involving students or staff must remain under human authority. AI informs; humans decide. This principle is embedded in most leading governance frameworks and is a baseline requirement under EU AI Act provisions for high-risk systems.

3. Human-in-the-Loop (HITL) Oversight

Rather than permitting AI systems to operate autonomously, HITL governance requires human review at every critical decision point.

An AI system might analyse student engagement patterns and flag students at risk of dropping out, but advisors retain full decision-making authority over any intervention.

MIT’s published AI Policy Principles identify HITL oversight as a foundational standard for AI systems that affect student outcomes, a position reflected in the broader governance frameworks many research universities are now formalising.

This approach allows institutions to gain AI-driven insights while preserving academic accountability and legal defensibility.
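The human-in-the-loop pattern described above can be sketched in a few lines of Python. This is a minimal illustration, not any institution's actual system: the risk scores, the 0.7 threshold, and the names `Flag`, `flag_students`, `review`, and `interventions_to_run` are all hypothetical. What it shows is structural: the AI step can only create pending recommendations, and nothing is acted on until a named advisor records a decision.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Decision(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"


@dataclass
class Flag:
    """An AI-generated recommendation that waits for a human decision."""
    student_id: str
    risk_score: float  # hypothetical model output between 0 and 1
    decision: Decision = Decision.PENDING
    reviewer: Optional[str] = None  # the advisor accountable for the decision


def flag_students(risk_scores: dict[str, float], threshold: float = 0.7) -> list[Flag]:
    """AI step: turn risk scores into flags. It recommends; it never acts."""
    return [
        Flag(student_id=sid, risk_score=score)
        for sid, score in sorted(risk_scores.items())
        if score >= threshold
    ]


def review(flag: Flag, advisor: str, approve: bool) -> None:
    """Human step: an advisor approves or rejects the flag and is recorded by name."""
    flag.decision = Decision.APPROVED if approve else Decision.REJECTED
    flag.reviewer = advisor


def interventions_to_run(flags: list[Flag]) -> list[str]:
    """Only advisor-approved flags lead to action; pending ones are never acted on."""
    return [f.student_id for f in flags if f.decision is Decision.APPROVED]


if __name__ == "__main__":
    flags = flag_students({"s1": 0.82, "s2": 0.35, "s3": 0.91})
    print([f.student_id for f in flags])  # ['s1', 's3']
    print(interventions_to_run(flags))    # [] until an advisor reviews
    review(flags[0], advisor="advisor_a", approve=True)
    print(interventions_to_run(flags))    # ['s1']
```

Keeping the reviewer's name on every decision is what makes the outcome auditable later: each intervention traces back to a person, not just a model score.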

4. Continuous Monitoring Over Static Policy

Traditional IT policies are approved once and periodically reviewed. AI governance cannot work this way. AI models are updated, training data changes, and new capabilities emerge on timelines that annual policy reviews cannot match.

The OECD’s Framework for the Classification of AI Systems recommends “continuous assurance” models: ongoing monitoring of AI behaviour rather than one-time approval.

Universities adopting this approach build audit mechanisms directly into their AI procurement contracts.

AI Governance and Academic Integrity

Generative AI has transformed academic integrity from a plagiarism problem into an authorship problem, and the two require fundamentally different responses. This is one of the most visible places where AI governance in higher education directly shapes the student experience.

Traditional plagiarism detection looked for copied text. AI-generated content is usually not copied verbatim from any existing source, so text-matching tools like Turnitin are insufficient as standalone solutions.

The University of Oxford’s Academic Integrity Framework, revised in 2024, explicitly acknowledges this, pivoting from detection-first approaches toward assessment redesign and transparent disclosure policies.

Leading institutions are responding across three fronts:

1. Assessment Redesign

Oral examinations, project-based work, and in-class analytical tasks evaluate understanding in ways that are far harder for AI to simulate.

Stanford’s Hasso Plattner Institute of Design has piloted process documentation approaches in select courses, where students submit drafts, annotations, and reflective journals that demonstrate the thinking behind outputs, not just the outputs themselves.

2. Disclosure-Based Policies

Rather than attempting blanket bans, which EDUCAUSE notes are largely unenforceable, institutions like University College London now require students to declare when and how AI tools were used, treating transparency as an academic skill in itself.

3. Faculty Development

Separate EDUCAUSE faculty readiness findings indicate that fewer than 30% of faculty feel confident designing AI-resilient assessments.

Governance frameworks that invest in faculty training, not just student-facing policies, produce more consistent and defensible integrity standards.

The EU AI Act: What Universities Cannot Ignore

The EU AI Act introduces the most significant regulatory change for universities operating in or with European institutions since GDPR.

Key implications for higher education:

- High-risk classification: AI systems used for student assessment, admissions screening, and performance monitoring fall under the Act’s high-risk category.

These systems require conformity assessments, bias testing, human oversight mechanisms, and full auditability before deployment.

- Prohibited practices: AI systems that use subliminal techniques or exploit student vulnerabilities, a risk directly relevant to adaptive learning platforms, are prohibited outright.

- Extraterritorial reach: Universities outside the EU may still fall under the Act’s scope when the outputs of their AI systems are used in the EU, for example when assessing EU-based students or partnering with EU institutions.

Institutions that have not begun EU AI Act compliance assessments are already behind. The Act’s high-risk provisions apply from August 2026 for most use cases.

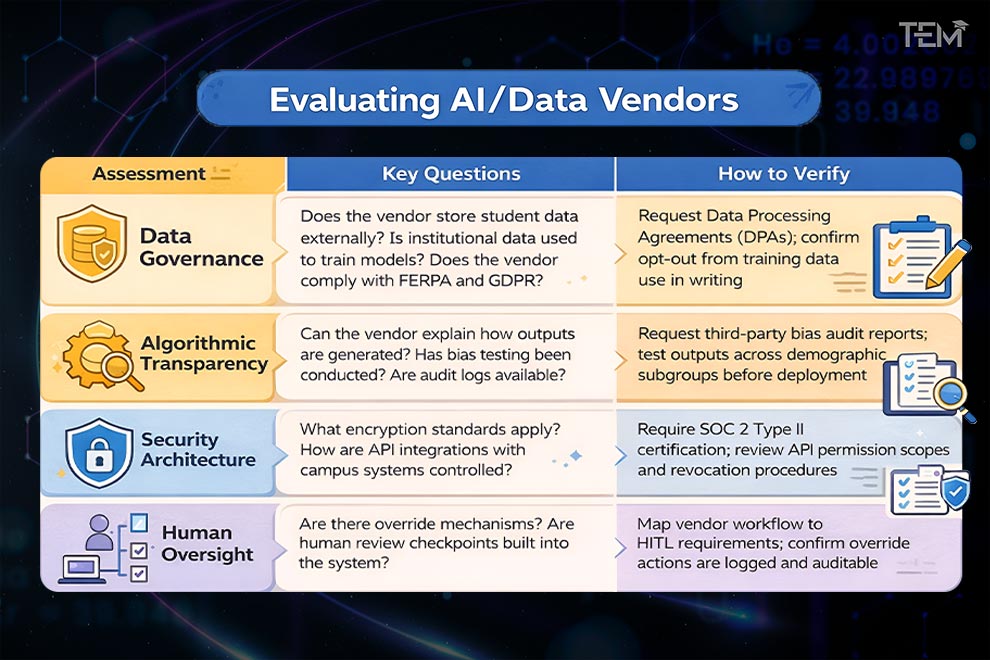

AI Vendor Risk: A Governance Checklist

Every AI tool adopted by a university carries institutional risk. A structured vendor assessment protects against shadow AI entering through procurement channels.

Governance committees should evaluate vendors across four dimensions:

Universities increasingly apply zero-trust security principles to AI vendor evaluation, treating every external system as a potential risk until verified, rather than trusted by default.

Building an Institutional AI Governance Roadmap

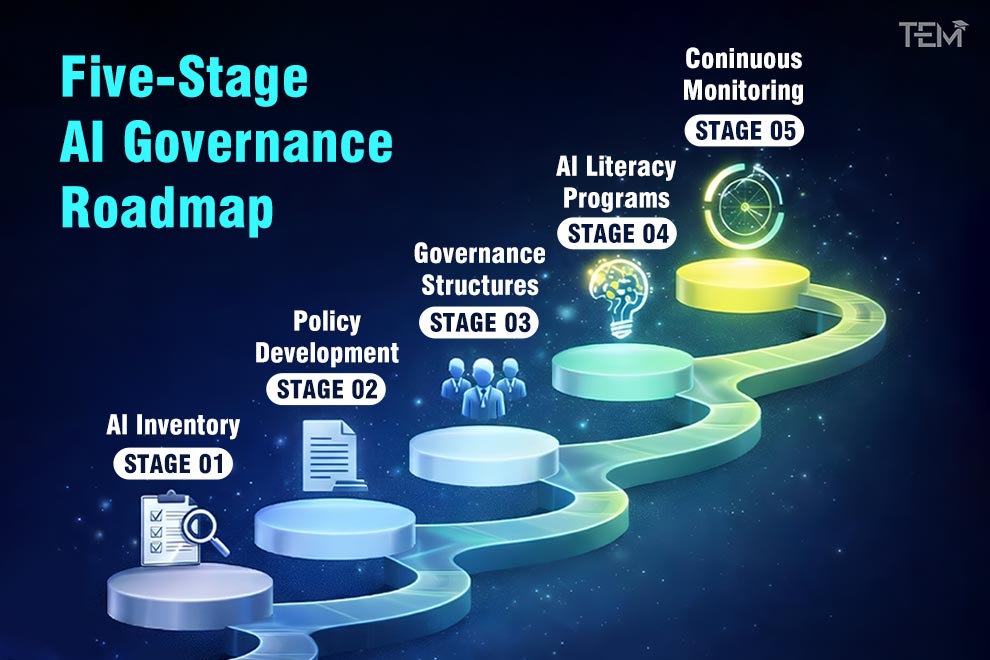

Policy without implementation is a document, not a governance framework. Institutions building AI governance in higher education from the ground up should move through five stages sequentially rather than simultaneously.

Stage 1: AI Inventory

Map every AI tool currently in use across teaching, research, administration, and student services. Most universities discover significantly more tools than anticipated.

This inventory process is typically when the full scale of unsanctioned AI adoption becomes visible for the first time.
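An inventory is, at its core, a reconciliation between what is approved and what is actually in use. The sketch below assumes a hypothetical approved-tool register and a list of (department, tool) observations collected during Stage 1; the tool names are invented for illustration. Anything observed but not approved surfaces as a shadow AI candidate, grouped by the departments using it.

```python
from collections import defaultdict

# Hypothetical register of tools approved by the AI steering committee
# (names stored in lowercase so comparisons are case-insensitive).
APPROVED_TOOLS = {"campus-copilot", "library-research-assistant"}


def find_shadow_ai(
    observations: list[tuple[str, str]], approved: set[str]
) -> dict[str, set[str]]:
    """Map each unapproved AI tool to the departments observed using it.

    `observations` holds (department, tool) pairs gathered during the inventory,
    e.g. from surveys, procurement records, or network logs.
    """
    shadow: defaultdict[str, set[str]] = defaultdict(set)
    for department, tool in observations:
        name = tool.strip().lower()  # normalise spelling and whitespace differences
        if name not in approved:
            shadow[name].add(department)
    return dict(shadow)


if __name__ == "__main__":
    seen = [
        ("History", "campus-copilot"),
        ("Biology", "OpenDataBot"),
        ("Admissions", "opendatabot "),
        ("Law", "DraftHelper"),
    ]
    for tool, depts in sorted(find_shadow_ai(seen, APPROVED_TOOLS).items()):
        print(tool, sorted(depts))
    # drafthelper ['Law']
    # opendatabot ['Admissions', 'Biology']
```

Grouping by tool rather than by department makes it easier to see which unsanctioned tools have spread widely enough to warrant a formal vendor review.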

Stage 2: Policy Development

Draft acceptable use policies, research ethics guidelines, procurement standards, and data governance rules. Policies should define what is permitted, what requires approval, and what is prohibited, with clarity on consequences.

Stage 3: Governance Structures

Establish the AI steering committee, data governance board, and ethics review panel. Define decision rights clearly: who approves new AI tools, who monitors deployed systems, and who escalates concerns.

Stage 4: AI Literacy Programs

Governance fails if faculty and staff lack the knowledge to apply it. Training should cover responsible AI use in teaching, algorithmic bias recognition, and the practical implications of institutional policy, not just the existence of that policy.

Stage 5: Continuous Monitoring

Build review cycles into governance from day one. AI systems change. Regulations change. Institutional risk profiles change.

Annual audits of deployed AI systems, quarterly vendor reviews, and real-time monitoring of high-risk applications should be standard by the time a university reaches governance maturity.
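The review cadence in Stage 5 can be tracked with a simple schedule. This sketch assumes 365-day audit and 91-day vendor review intervals and uses invented system and vendor names; real-time monitoring of high-risk applications needs continuous tooling and is outside its scope. It answers only one question: which scheduled reviews are overdue?

```python
from dataclasses import dataclass
from datetime import date, timedelta

# Review cadence from the roadmap above; the exact day counts are assumptions.
REVIEW_INTERVALS = {
    "system_audit": timedelta(days=365),  # annual audit of each deployed AI system
    "vendor_review": timedelta(days=91),  # roughly quarterly vendor review
}


@dataclass
class ReviewRecord:
    item: str          # hypothetical name of an AI system or vendor
    review_type: str   # key into REVIEW_INTERVALS
    last_reviewed: date


def next_due(record: ReviewRecord) -> date:
    return record.last_reviewed + REVIEW_INTERVALS[record.review_type]


def overdue(records: list[ReviewRecord], today: date) -> list[str]:
    """Items whose next review date has passed, most overdue first."""
    late = sorted((r for r in records if next_due(r) < today), key=next_due)
    return [r.item for r in late]


if __name__ == "__main__":
    records = [
        ReviewRecord("advising-chatbot", "system_audit", date(2025, 1, 15)),
        ReviewRecord("grading-vendor", "vendor_review", date(2025, 12, 20)),
        ReviewRecord("tutoring-vendor", "vendor_review", date(2025, 10, 1)),
    ]
    print(overdue(records, today=date(2026, 3, 1)))
    # ['tutoring-vendor', 'advising-chatbot']
```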

Preparing for Agentic AI: The Next Governance Frontier

Generative AI is already a governance challenge. Agentic AI systems, capable of taking autonomous actions across research databases, scheduling systems, administrative workflows, and communication platforms, will be substantially harder to govern.

Multi-agent architectures, where multiple AI systems collaborate and delegate tasks to one another, are moving from research labs to enterprise software. Several major edtech vendors are already piloting agentic tools for student advising and course personalisation.

For universities, this raises governance questions that current frameworks are not designed to answer. Early guidance from the World Economic Forum’s AI Governance Alliance and emerging institutional practice points toward three priority areas:

1. Accountability chains

When an autonomous AI agent makes a decision that harms a student, current frameworks built around individual human decision-makers do not map cleanly onto multi-step automated pipelines.

Leading institutions are beginning to designate a named human “AI accountability owner” for each deployed agentic system, ensuring that every automated workflow traces back to an identifiable individual with review authority.

2. Access governance

Agentic systems require dynamic, scoped permissions rather than static access grants.

The emerging best practice, aligned with the zero-trust principles already applied to vendor evaluation, is to grant agentic AI the minimum permissions required for a specific task, with automatic expiration and human-triggered re-authorisation for sensitive data environments, including student records and research repositories.
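The scoped-permission model can be illustrated with a deny-by-default grant check. In this sketch, which assumes hypothetical scope names such as `student_records:read` and an invented agent name, every grant is tied to one agent, one scope, and one task, expires automatically, and cannot be issued for a sensitive scope without a named human approver. It illustrates least-privilege, time-limited access; it is not a drop-in authorisation system.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Optional

# Hypothetical scope names; sensitive scopes need a named human approver.
SENSITIVE_SCOPES = {"student_records:read", "research_repo:read"}


@dataclass(frozen=True)
class Grant:
    agent_id: str
    scope: str
    task_id: str          # the grant is valid for one task only
    expires_at: datetime
    approved_by: Optional[str] = None


def issue_grant(agent_id: str, scope: str, task_id: str, ttl: timedelta,
                approved_by: Optional[str] = None,
                now: Optional[datetime] = None) -> Grant:
    """Issue a task-scoped grant that expires automatically after `ttl`."""
    if scope in SENSITIVE_SCOPES and approved_by is None:
        raise PermissionError(f"{scope} requires human re-authorisation")
    if now is None:
        now = datetime.now(timezone.utc)
    return Grant(agent_id, scope, task_id, now + ttl, approved_by)


def is_allowed(grants: list[Grant], agent_id: str, scope: str, task_id: str,
               now: Optional[datetime] = None) -> bool:
    """Deny by default: allow only if an unexpired grant matches agent, scope, and task."""
    if now is None:
        now = datetime.now(timezone.utc)
    return any(
        g.agent_id == agent_id and g.scope == scope
        and g.task_id == task_id and now < g.expires_at
        for g in grants
    )


if __name__ == "__main__":
    t0 = datetime(2026, 1, 1, 9, 0, tzinfo=timezone.utc)
    g = issue_grant("advising-agent", "calendar:write", "task-42",
                    ttl=timedelta(minutes=15), now=t0)
    print(is_allowed([g], "advising-agent", "calendar:write", "task-42",
                     now=t0 + timedelta(minutes=5)))   # True
    print(is_allowed([g], "advising-agent", "calendar:write", "task-42",
                     now=t0 + timedelta(minutes=20)))  # False: grant expired
```

Because access is checked against an explicit list of grants, an agent that asks for anything not granted, or asks after the grant has lapsed, is refused without any extra rule having to anticipate the request.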

3. Pipeline auditability

Multi-agent systems can obscure the origin of individual decisions across layers of automated delegation.

Universities building agentic governance policies are prioritising end-to-end logging requirements, mandating that every agent action, handoff, and decision point is recorded in an immutable audit trail that human reviewers can interrogate after the fact.
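One common way to make an audit trail tamper-evident is hash chaining, where each log entry includes a hash of the entry before it. The sketch below is an illustrative assumption rather than a prescribed standard, and the agent and action names are hypothetical. Hashing alone makes tampering detectable, not impossible: a truly immutable trail also needs write-once storage or an external copy of the latest hash.

```python
import hashlib
import json
from dataclasses import dataclass, field

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body: dict) -> str:
    # Canonical JSON so the same entry always hashes to the same value.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class AuditLog:
    """Append-only log in which each entry commits to the hash of the one before it."""
    entries: list[dict] = field(default_factory=list)

    def append(self, agent: str, action: str, detail: dict) -> str:
        prev = self.entries[-1]["hash"] if self.entries else GENESIS
        body = {"seq": len(self.entries), "agent": agent, "action": action,
                "detail": detail, "prev_hash": prev}
        entry_hash = _digest(body)
        self.entries.append({**body, "hash": entry_hash})
        return entry_hash

    def verify(self) -> bool:
        """Recompute the chain; an edited, reordered, or removed earlier entry breaks it.

        Truncating the newest entries is only detectable against a copy of the
        latest hash kept elsewhere.
        """
        prev = GENESIS
        for i, entry in enumerate(self.entries):
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body.get("seq") != i or body.get("prev_hash") != prev:
                return False
            if entry.get("hash") != _digest(body):
                return False
            prev = entry["hash"]
        return True


if __name__ == "__main__":
    log = AuditLog()
    log.append("planner-agent", "delegate", {"to": "records-agent", "task": "task-42"})
    log.append("records-agent", "read", {"scope": "student_records:read"})
    print(log.verify())  # True
    log.entries[0]["detail"]["task"] = "task-99"  # simulate tampering
    print(log.verify())  # False
```

Recording each handoff (here, the `delegate` action) as its own entry is what lets a reviewer reconstruct which agent acted on whose instruction.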

The World Economic Forum’s AI Governance Alliance recommends that institutions begin developing agentic AI governance policies now, before deployment pressure forces universities into reactive policymaking.

The Future of Responsible AI in Higher Education

AI will reshape every function of the modern university, from learning and research to operations and student support, but the shape it takes will not be determined by the technology alone.

It will be determined by the choices institutions make now about accountability, oversight, and whose interests governance is designed to protect.

Strong AI governance in higher education is not a constraint on innovation. It is the condition that makes innovation defensible: to students whose data is at stake, to regulators who are watching, and to the public that grants universities their social license to operate.

The universities that will define the next era of higher education are not necessarily the earliest adopters. They are the ones building the institutional infrastructure to ensure that every AI system they deploy can be explained, audited, and, if necessary, stopped.


FAQs

- What is the difference between AI policy and AI governance in universities?

AI policy refers to the written rules defining acceptable AI use across teaching, research, and administration. AI governance is the broader system of committees, monitoring processes, vendor oversight, and accountability structures that ensure those policies are actually implemented and enforced. Policy without governance is largely unenforceable.

- What does the EU AI Act require of universities?

Universities using AI in student assessment, admissions, or performance monitoring must comply with the Act’s high-risk AI provisions from August 2026. This includes bias testing, human oversight mechanisms, conformity assessments, and full audit trails. Institutions outside the EU may still fall under the Act’s scope when the outputs of their AI systems are used in the EU, for example when assessing EU-based students.

- How are universities addressing AI and academic integrity?

Leading institutions are moving away from detection-first approaches, which AI-generated content largely defeats, toward assessment redesign, transparent disclosure policies, and faculty development programs. The goal is to build AI literacy and responsible use habits rather than attempt unenforceable bans.

- What is shadow AI and why does it matter?

Shadow AI refers to AI tools used by faculty, students, or staff without institutional approval or oversight. It matters because these tools may expose student records, unpublished research, or intellectual property to external platforms without the university’s knowledge, creating data privacy, regulatory, and cybersecurity risks.