AI agents are moving quickly from experimentation to production. Universities are testing them in admissions, advising, grading, IT help desks, and student engagement workflows. Yet many of these deployments stall. Some produce confident but incorrect answers. Others loop endlessly or become too expensive to sustain.

The issue is rarely the model itself. It is how the system was designed. This is why building agentic AI applications with a problem-first approach is becoming essential for institutions that want reliability, accountability, and measurable outcomes.

Too many teams begin with tools instead of clearly defining the operational problem. They prototype an agent before setting boundaries, guardrails, or measurable outcomes. The result is complexity without control.

A problem-first approach reverses that order. Instead of asking, “What can this AI do?” institutions ask, “What specific bottleneck are we solving, and how will we know it worked?”

In the sections ahead, we move from principle to execution, outlining the architecture, guardrails, evaluation frameworks, and real higher education use cases that turn AI agents into dependable systems rather than experimental pilots.

Why Most Agentic AI Projects Fail

Before discussing frameworks, we need to understand failure.

Across industries, early agentic AI pilots often struggle for four predictable reasons.

1. The Tool-First Trap

Many teams start with a powerful large language model and ask it to “act autonomously.” The agent is given broad instructions, a few APIs, and minimal constraints.

Without clearly defined boundaries:

- The agent makes assumptions.

- It overreaches its permissions.

- It improvises when uncertain.

- It generates plausible but inaccurate responses.

The system may appear impressive in demos. In production, it becomes unreliable.

2. Undefined Scope

A university might say: “Let’s build an AI admissions agent.” But what does that mean?

- Verify transcripts?

- Answer applicant queries?

- Evaluate eligibility?

- Detect missing documents?

Without narrowing the scope to a single operational friction point, complexity multiplies. Agents become vague assistants instead of structured systems. This lack of precision often leads to security vulnerabilities, such as the AI-driven ‘Ghost Student’ enrollment fraud currently targeting online programs.

3. Lack of Deterministic Control

Agentic AI is not just generation. It is orchestration.

If reasoning and execution are not separated:

- The model both decides and acts.

- There is no strict workflow.

- Debugging becomes nearly impossible.

In distributed systems engineering, uncontrolled state is dangerous. The same applies to agentic AI.

4. No Observability

If you cannot trace:

- What the agent planned

- Which tool was invoked

- Why it chose a specific action

- Where it failed

You cannot improve it.

Universities especially require audit trails for governance, compliance, and accountability.

Without observability, agents become black boxes.

What Agentic AI Really Means

To build properly, we need clarity.

Agentic AI is not a chatbot with tools. It marks the next evolution in how AI is transforming learning and institutional operations. At its core, it is a goal-directed system that:

- Understands intent

- Breaks a goal into steps

- Selects tools

- Executes actions

- Evaluates results

- Adjusts if needed

In simple terms, it behaves less like a conversational assistant and more like a workflow engine with reasoning capabilities.

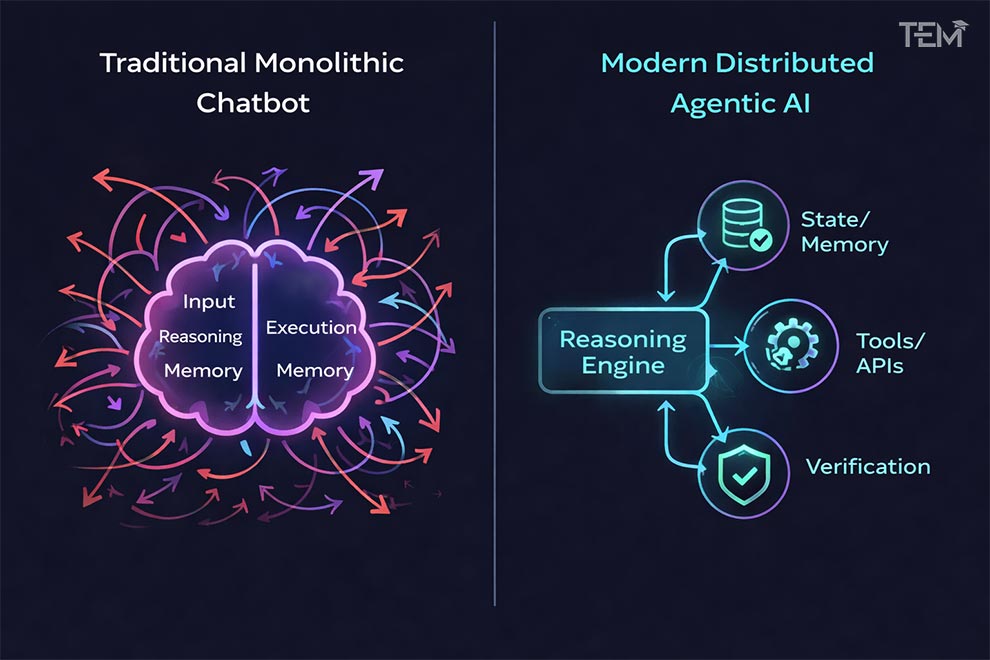

From Monolithic Chatbots to Distributed Systems

Modern agentic architectures resemble microservices:

- One component handles reasoning.

- Another manages state.

- Another executes tools.

- Another verifies outputs.

This separation increases reliability.

Instead of letting one model “do everything,” we treat the language model as a reasoning engine within a structured system.

That shift from monolith to distributed orchestration is what makes production-grade agents possible.

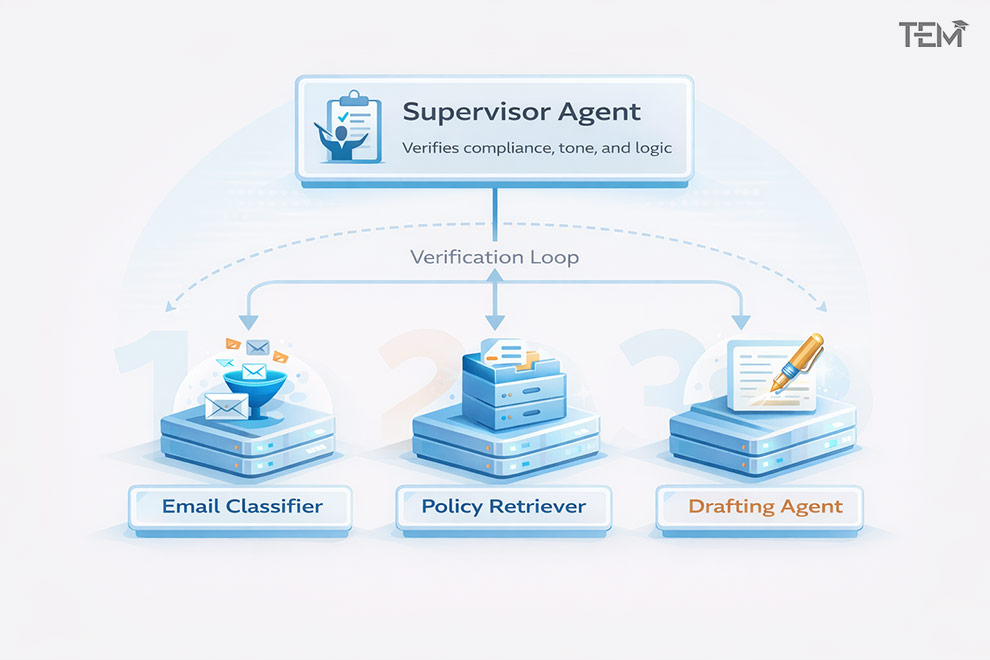

Multi-Agent Workflows Explained Simply

In complex environments like higher education:

- One agent may classify IT tickets.

- Another may retrieve knowledge base articles.

- A supervisor agent may decide to escalate.

Each has a defined role.

This reduces chaos and increases control.

The Blueprint for Building Agentic AI Applications with a Problem-First Approach

Now we move from theory to execution.

A problem-first approach follows disciplined steps before writing code.

Step 1: Define the Operational Bottleneck

Not a broad ambition.

A specific friction point.

Examples in higher education:

- International transcript pre-validation is causing 6-week delays.

- Grading in large introductory courses is repetitive and time-consuming.

- IT tickets are overwhelming campus support teams.

- Advisors are overloaded during enrollment cycles.

The question is: Where is measurable inefficiency?

Step 2: Set Boundaries and Constraints

Before building:

- What data can the agent access?

- What actions is it allowed to take?

- When must a human intervene?

- What is outside its authority?

In regulated environments, this step protects institutions.

Constraints are not limitations. They are a design strength.
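One way to make these four questions concrete is to write the answers down as data rather than prose. The sketch below is only an illustration: the `AgentPolicy` class, the action names, and the data source names are all hypothetical and would be replaced by whatever your institution actually defines.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentPolicy:
    """Declarative boundaries for one agent. All names here are hypothetical."""
    readable_data: frozenset[str]         # what data the agent can access
    allowed_actions: frozenset[str]       # what actions it is allowed to take
    needs_human_approval: frozenset[str]  # actions where a human must intervene

    def check(self, action: str, data_source: str) -> str:
        """Return 'allow', 'escalate', or 'deny' for a proposed step."""
        if action not in self.allowed_actions:
            return "deny"      # outside the agent's authority
        if data_source not in self.readable_data:
            return "deny"      # data the agent may not touch
        if action in self.needs_human_approval:
            return "escalate"  # permitted, but only with a human sign-off
        return "allow"


# Assumed example policy for a document pre-validation agent.
ADMISSIONS_POLICY = AgentPolicy(
    readable_data=frozenset({"application_documents"}),
    allowed_actions=frozenset({"flag_missing_document", "update_application_status"}),
    needs_human_approval=frozenset({"update_application_status"}),
)

print(ADMISSIONS_POLICY.check("flag_missing_document", "application_documents"))
print(ADMISSIONS_POLICY.check("update_application_status", "application_documents"))
print(ADMISSIONS_POLICY.check("flag_missing_document", "financial_aid_records"))
```

Because the policy is plain data, it can be reviewed by compliance staff and versioned alongside the code that enforces it.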

Step 3: Define Success Metrics Before Code

If you cannot measure improvement, you cannot justify deployment.

Metrics might include:

- Reduction in processing time

- Accuracy rate above a defined threshold

- Cost per transaction ceiling

- Escalation rate

This transforms AI from experimentation into operations.
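The metrics above can be fixed as explicit thresholds before any agent code exists. The following is a minimal sketch under assumed numbers: the `SuccessCriteria` fields and every threshold value are illustrative placeholders, not recommended targets.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SuccessCriteria:
    """Targets agreed before the pilot. Values used below are placeholders."""
    min_time_reduction: float        # fraction, e.g. 0.30 means 30% faster
    min_accuracy: float              # fraction of correct outcomes
    max_cost_per_transaction: float  # currency units per processed item
    max_escalation_rate: float       # fraction handed to humans


def evaluate_pilot(criteria: SuccessCriteria, *, baseline_minutes: float,
                   pilot_minutes: float, correct: int, total: int,
                   total_cost: float, escalated: int) -> dict[str, bool]:
    """Compare pilot measurements with the agreed criteria, metric by metric."""
    time_reduction = 1 - pilot_minutes / baseline_minutes
    return {
        "processing_time": time_reduction >= criteria.min_time_reduction,
        "accuracy": correct / total >= criteria.min_accuracy,
        "cost": total_cost / total <= criteria.max_cost_per_transaction,
        "escalation": escalated / total <= criteria.max_escalation_rate,
    }


criteria = SuccessCriteria(0.30, 0.95, 0.50, 0.20)
report = evaluate_pilot(criteria, baseline_minutes=40, pilot_minutes=24,
                        correct=192, total=200, total_cost=80.0, escalated=30)
print(report)
```

A deployment decision then becomes a check that every value in the report is true, rather than a judgment call after the fact.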

Step 4: Build a Minimum Viable Agent (MVA)

Not a universal assistant.

A single controlled workflow.

- One defined task

- One structured input

- One verified output path

- One escalation rule

Start small. Validate. Expand.

This is how distributed systems mature, and agentic systems should follow the same logic.

Architecture That Makes Agents Reliable

If the problem-first blueprint defines what you are solving, architecture determines whether the solution survives production.

Agentic AI fails when reasoning and execution blur together. It succeeds when responsibilities are clearly separated and controlled.

Let’s break down the architectural principles that make this possible.

1. Microservices, Not Monoliths

Traditional chatbots are monolithic.

One model receives input and produces output.

Agentic AI should not work that way.

In modern distributed system design:

- Reasoning is separated from execution.

- State is stored externally.

- Tools are invoked through controlled interfaces.

- Verification layers validate outputs.

This structure mirrors microservices architecture. Each component has a defined role. If one fails, the entire system does not collapse. Many architects are now utilizing Small Language Models (SLMs) for these roles to ensure the system remains cost-effective and fast.

For universities, this matters. Admissions, advising, and compliance systems cannot depend on a single unpredictable decision engine.

2. Design Patterns That Work

Several architectural patterns are now widely used in production agentic systems. Understanding them helps teams choose the right level of autonomy.

A. ReAct (Reason + Act)

ReAct allows an agent to:

- Think through a step.

- Take an action (such as calling an API).

- Observe the result.

- Continue reasoning.

This loop continues until the task is complete.

It works well for dynamic environments like IT ticket triage, where information must be retrieved before deciding.

However, without guardrails, the loop can continue indefinitely. That is why constraints matter.
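A bounded version of this loop might look like the sketch below. It is not a real agent: `scripted_reasoner` stands in for the language model, and the `search_kb` tool and its single knowledge base entry are invented for illustration. The point is the step cap, which turns a potentially endless loop into an escalation.

```python
from typing import Callable


def search_kb(query: str) -> str:
    """Hypothetical knowledge base lookup with one canned article."""
    articles = {"vpn": "Reinstall the VPN profile from the self-service portal."}
    return articles.get(query, "no article found")


TOOLS: dict[str, Callable[[str], str]] = {"search_kb": search_kb}


def scripted_reasoner(history: list[str]) -> tuple[str, str]:
    """Stand-in for the model: returns (action, argument) given past observations."""
    if not history:
        return "search_kb", "vpn"
    return "finish", history[-1]


def run_react(reason: Callable[[list[str]], tuple[str, str]], max_steps: int = 5) -> str:
    history: list[str] = []
    for _ in range(max_steps):
        action, argument = reason(history)       # think through a step
        if action == "finish":
            return argument
        if action not in TOOLS:
            return "escalate: unknown tool requested"
        history.append(TOOLS[action](argument))  # act, then observe the result
    return "escalate: step limit reached"        # guardrail against endless loops


print(run_react(scripted_reasoner))
```

A reasoner that never chooses `finish` exits after `max_steps` iterations with an escalation message instead of running up cost.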

B. Plan-and-Execute

In this pattern:

- The agent first generates a structured plan.

- Then it executes each step deterministically.

This reduces improvisation. The reasoning phase is separated from the action phase.

For higher education workflows such as transcript validation, this structure improves traceability and debugging.
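As a rough sketch of the pattern, the plan can be a list of step names that is checked against a registry before anything runs. The three checks and the `make_plan` stub below are assumptions made for illustration; in a real system the plan would come from the model and the checks from your admissions rules.

```python
from typing import Callable


def check_format(doc: dict) -> bool:
    return doc.get("format") == "pdf"


def check_institution(doc: dict) -> bool:
    return bool(doc.get("institution"))


def check_grades_present(doc: dict) -> bool:
    return len(doc.get("grades", [])) > 0


# Only steps registered here can ever be executed.
STEP_REGISTRY: dict[str, Callable[[dict], bool]] = {
    "check_format": check_format,
    "check_institution": check_institution,
    "check_grades_present": check_grades_present,
}


def make_plan(goal: str) -> list[str]:
    """Stand-in for the planning model; returns a fixed plan in this sketch."""
    return ["check_format", "check_institution", "check_grades_present"]


def execute_plan(plan: list[str], doc: dict) -> list[tuple[str, bool]]:
    """Run each planned step in order and return a trace of (step, passed)."""
    unknown = [step for step in plan if step not in STEP_REGISTRY]
    if unknown:
        raise ValueError(f"plan contains unregistered steps: {unknown}")
    trace = []
    for step in plan:
        passed = STEP_REGISTRY[step](doc)
        trace.append((step, passed))
        if not passed:
            break  # stop at the first failure so the trace shows where it failed
    return trace


transcript = {"format": "pdf", "institution": "Example University", "grades": [3.5]}
print(execute_plan(make_plan("validate transcript"), transcript))
```

The returned trace is what makes debugging tractable: each entry names the step and its outcome.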

3. Hierarchical Supervisor Model

Here, multiple specialized agents operate under a supervisor agent. For example:

- Agent A classifies incoming emails.

- Agent B retrieves institutional policies.

- Agent C drafts a response.

- A supervisor verifies compliance and tone.

This mirrors how human teams operate. It also reduces the risk of a single agent overreaching its authority.
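The division of labor above can be sketched with plain functions standing in for the agents. Everything here is hypothetical: the keyword classifier, the one-entry policy table, and the compliance rule (the reply must quote the policy text) are simplifications chosen to keep the example short.

```python
from typing import Optional

# Hypothetical policy store with a single entry.
POLICIES = {"deadline": "Applications close on the date published on the admissions page."}


def classify_email(text: str) -> str:
    """Agent A: a keyword rule standing in for a classifier model."""
    return "deadline" if "deadline" in text.lower() else "other"


def retrieve_policy(category: str) -> Optional[str]:
    """Agent B: look up the institutional policy for a category."""
    return POLICIES.get(category)


def draft_reply(policy: str) -> str:
    """Agent C: draft a response grounded in the retrieved policy."""
    return f"Thank you for your message. {policy}"


def supervise(email_text: str) -> dict:
    """Supervisor: coordinates the agents and decides approve vs. escalate."""
    category = classify_email(email_text)
    policy = retrieve_policy(category)
    if policy is None:
        return {"status": "escalated", "reason": f"no policy for category '{category}'"}
    reply = draft_reply(policy)
    if policy not in reply:  # simplified compliance check
        return {"status": "escalated", "reason": "draft does not quote the policy"}
    return {"status": "approved", "reply": reply}


print(supervise("When is the application deadline?"))
print(supervise("Can I bring my dog to campus?"))
```

No single function can both draft and approve a reply, which is the property the supervisor model is meant to provide.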

4. Deterministic Rails: The Hidden Backbone

The most reliable agentic systems do not give language models full freedom. They use deterministic rails.

This means:

- Strict API schemas define what tools accept and return.

- Hard-coded state machines control workflow transitions.

- Predefined exit conditions stop infinite loops.

- Confidence thresholds trigger human escalation.

In simple terms, the model reasons, but the system decides what is allowed. This distinction is critical in regulated environments like education.
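A hard-coded state machine is one of the simpler rails to illustrate. In the sketch below, the state names and the 0.8 confidence threshold are assumptions; the model may propose a next state, but the transition table decides whether that proposal is allowed.

```python
# Assumed workflow states for a document review; terminal states have no exits.
ALLOWED_TRANSITIONS: dict[str, set[str]] = {
    "received": {"validating"},
    "validating": {"approved", "needs_human_review"},
    "needs_human_review": {"approved", "rejected"},
    "approved": set(),
    "rejected": set(),
}

CONFIDENCE_THRESHOLD = 0.8  # placeholder value, to be set per workflow


def next_state(current: str, proposed: str, confidence: float) -> str:
    """Apply the rails to a transition proposed by the model."""
    if proposed not in ALLOWED_TRANSITIONS.get(current, set()):
        raise ValueError(f"transition {current} -> {proposed} is not allowed")
    # Low confidence during validation is rerouted to a person.
    if current == "validating" and confidence < CONFIDENCE_THRESHOLD:
        return "needs_human_review"
    return proposed


print(next_state("received", "validating", 0.99))
print(next_state("validating", "approved", 0.95))
print(next_state("validating", "approved", 0.50))
```

Since `approved` and `rejected` have no outgoing transitions, every workflow has a predefined exit and cannot loop forever.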

5. State Management and Persistent Memory

Agentic systems operate across multiple steps and sessions. They require structured memory.

There are three common layers of memory:

- Short-term memory: Task-specific context.

- Session memory: Interaction history within a workflow.

- Persistent memory: Stored data that survives across sessions.

Without structured state management:

- Agents forget context.

- They repeat actions.

- They lose track of progress.

- Debugging becomes impossible.
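To show how the three memory layers differ, here is a small sketch that keeps short-term and session memory in process and writes persistent memory to a JSON file. The `AgentMemory` class and its method names are hypothetical, and a production system would more likely use a database than a local file.

```python
import json
import tempfile
from pathlib import Path


class AgentMemory:
    """Three memory layers in one place. A sketch, not a production store."""

    def __init__(self, store_path: Path):
        self.short_term: dict = {}     # task-specific context, replaced per task
        self.session: list[str] = []   # interaction history within this workflow
        self._store_path = store_path  # persistent memory that survives sessions

    def start_task(self, **context) -> None:
        self.short_term = dict(context)

    def record(self, event: str) -> None:
        self.session.append(event)

    def load_all(self) -> dict:
        if self._store_path.exists():
            return json.loads(self._store_path.read_text())
        return {}

    def save_progress(self, key: str, value: str) -> None:
        data = self.load_all()
        data[key] = value
        self._store_path.write_text(json.dumps(data))


with tempfile.TemporaryDirectory() as folder:
    store = Path(folder) / "agent_state.json"
    first = AgentMemory(store)
    first.start_task(application_id="app-1")
    first.record("validation started")
    first.save_progress("app-1", "validating")

    second = AgentMemory(store)  # a new session starts with empty in-process memory
    print(second.session, second.load_all())
```

The second instance has no session history, yet it can still see that `app-1` was left in the `validating` state, so it does not need to repeat the earlier work.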

Modern orchestration tools now allow teams to:

- Visualize execution paths.

- Rewind workflow states.

- Inspect reasoning traces.

For institutions handling thousands of workflows, this visibility is essential.

6. Separation of Reasoning and Execution

This is the core architectural principle.

The language model should not directly execute actions.

Instead:

- The model proposes an action.

- The system validates it.

- A tool executes it.

- The result is returned for evaluation.

That separation creates control, security, and accountability.
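The four steps above map naturally onto a small dispatcher. In this sketch the model's output is assumed to be a JSON string naming a tool and its arguments; the `send_reminder` tool and its schema are invented for the example. The model never calls the tool itself.

```python
import json

# Hypothetical tool schema: argument name -> required type.
TOOL_SCHEMAS = {"send_reminder": {"student_id": str, "document": str}}


def send_reminder(student_id: str, document: str) -> str:
    """Stand-in for a real side effect such as sending an email."""
    return f"reminder sent to {student_id} about {document}"


TOOL_IMPLS = {"send_reminder": send_reminder}


def validate(proposal: object) -> list[str]:
    """Return a list of problems; an empty list means the proposal is allowed."""
    if not isinstance(proposal, dict):
        return ["proposal must be a JSON object"]
    tool, args = proposal.get("tool"), proposal.get("args")
    if not isinstance(tool, str) or tool not in TOOL_SCHEMAS:
        return [f"unknown tool: {tool!r}"]
    if not isinstance(args, dict):
        return ["args must be a JSON object"]
    schema = TOOL_SCHEMAS[tool]
    errors = []
    if set(args) != set(schema):
        errors.append(f"expected arguments {sorted(schema)}, got {sorted(args)}")
    for name, expected_type in schema.items():
        if name in args and not isinstance(args[name], expected_type):
            errors.append(f"{name} must be {expected_type.__name__}")
    return errors


def handle(model_output: str) -> dict:
    """The model proposes; the system validates; a tool executes."""
    try:
        proposal = json.loads(model_output)
    except json.JSONDecodeError:
        return {"executed": False, "errors": ["output is not valid JSON"]}
    errors = validate(proposal)
    if errors:
        return {"executed": False, "errors": errors}
    result = TOOL_IMPLS[proposal["tool"]](**proposal["args"])
    return {"executed": True, "result": result}  # returned for evaluation


print(handle('{"tool": "send_reminder", "args": {"student_id": "S1", "document": "transcript"}}'))
print(handle('{"tool": "delete_record", "args": {}}'))
```

Anything the model proposes that is malformed, unknown, or mistyped is rejected before it can have an effect.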

Observability, Evaluation, and Guardrails

Architecture prevents chaos. Observability prevents blind spots.

Without measurement, agentic systems degrade over time.

1. Agent Tracing and Logging

Every production-grade agent should log:

- The reasoning step taken

- The tool selected

- The input and output schema

- The confidence score

- The final decision

This creates an audit trail.

In higher education, auditability supports compliance and institutional trust.
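A trace record covering the fields listed above could be as simple as one JSON line per step. The `TraceEvent` structure below is an assumed shape rather than a standard format, and the example values are made up.

```python
import json
import time
import uuid
from dataclasses import asdict, dataclass, field


@dataclass
class TraceEvent:
    """One audited agent step. Field names are illustrative."""
    run_id: str
    reasoning: str      # the reasoning step taken
    tool: str           # the tool selected
    tool_input: dict    # input passed to the tool
    tool_output: dict   # output returned by the tool
    confidence: float   # the confidence score
    decision: str       # the final decision
    timestamp: float = field(default_factory=time.time)


def to_log_line(event: TraceEvent) -> str:
    """Serialize one event as a single JSON line for an append-only log."""
    return json.dumps(asdict(event), sort_keys=True)


event = TraceEvent(
    run_id=str(uuid.uuid4()),
    reasoning="Transcript lacks an issuing institution",
    tool="flag_missing_document",
    tool_input={"application_id": "app-1", "field": "institution"},
    tool_output={"flagged": True},
    confidence=0.93,
    decision="request_resubmission",
)
print(to_log_line(event))
```

One line per step keeps the log easy to append to, search, and replay during an audit.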

2. Evaluation Frameworks

Evaluation must go beyond “it sounds good.”

Institutions should test:

- Accuracy against benchmark datasets

- Edge-case failure behavior

- Cost per execution

- Response time consistency

- Escalation frequency

Evaluation should occur:

- Before deployment

- During pilot

- After scaling

Agentic systems are dynamic. Monitoring cannot be a one-time event.
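A small harness can compute several of these measures in one pass, and the same harness can be rerun before deployment, during the pilot, and after scaling. The benchmark items, the `keyword_triage` stand-in agent, and the per-call cost below are all invented for illustration.

```python
import time
from typing import Callable


def keyword_triage(text: str) -> str:
    """Stand-in agent: routes by keyword and escalates anything it cannot place."""
    lowered = text.lower()
    if "password" in lowered:
        return "accounts"
    if "wifi" in lowered:
        return "network"
    return "ESCALATE"


def evaluate(agent: Callable[[str], str], benchmark: list[dict], cost_per_call: float) -> dict:
    """Run the agent over a labeled benchmark and summarize the results."""
    if not benchmark:
        raise ValueError("benchmark must not be empty")
    correct = escalated = 0
    latencies = []
    for item in benchmark:
        start = time.perf_counter()
        answer = agent(item["input"])
        latencies.append(time.perf_counter() - start)
        correct += answer == item["expected"]
        escalated += answer == "ESCALATE"
    n = len(benchmark)
    return {
        "accuracy": correct / n,
        "escalation_rate": escalated / n,
        "total_cost": cost_per_call * n,
        "max_latency_s": max(latencies),
    }


BENCHMARK = [
    {"input": "Password reset not working", "expected": "accounts"},
    {"input": "WiFi down in the library", "expected": "network"},
    {"input": "", "expected": "ESCALATE"},               # edge case: empty ticket
    {"input": "Projector broken in room 12", "expected": "av"},
]

print(evaluate(keyword_triage, BENCHMARK, cost_per_call=0.002))
```

Here the empty ticket counts as correct because escalation is the expected behavior, while the projector ticket shows up as both a miss and an escalation.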

3. Reducing Hallucination Risk

Hallucinations often occur when:

- The agent lacks sufficient context.

- It guesses missing data.

- It is allowed to answer beyond its authority.

Mitigation strategies include:

- Strict answer formats

- External verification layers

- Human review triggers

In grading or advising contexts, even small inaccuracies can create reputational risk.

4. Human-in-the-Loop Circuit Breakers

Autonomy does not mean the absence of humans.

Instead, autonomy should include escalation rules:

- If confidence < threshold → escalate.

- If action affects the student record → require approval.

- If cost exceeds predefined ceiling → halt workflow.

These circuit breakers protect both students and institutions.
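The three rules above translate almost directly into code. In this sketch the `ProposedAction` fields and the default thresholds (0.85 confidence, a cost ceiling of 1.00) are placeholder assumptions, and the rule order, cost first, is one reasonable choice among several.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProposedAction:
    """What the agent wants to do next. Field names are illustrative."""
    name: str
    confidence: float
    touches_student_record: bool
    cost_so_far: float  # accumulated spend for this workflow


def circuit_breaker(action: ProposedAction, min_confidence: float = 0.85,
                    cost_ceiling: float = 1.00) -> str:
    """Return 'halt', 'escalate', 'require_approval', or 'proceed'."""
    if action.cost_so_far > cost_ceiling:
        return "halt"              # cost exceeds the predefined ceiling
    if action.confidence < min_confidence:
        return "escalate"          # confidence below threshold
    if action.touches_student_record:
        return "require_approval"  # record changes need a human sign-off
    return "proceed"


print(circuit_breaker(ProposedAction("send_reminder", 0.95, False, 0.10)))
print(circuit_breaker(ProposedAction("update_gpa", 0.99, True, 0.10)))
print(circuit_breaker(ProposedAction("send_reminder", 0.40, False, 0.10)))
print(circuit_breaker(ProposedAction("send_reminder", 0.95, False, 2.50)))
```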

Agentic AI in Higher Education: Real Applications

Now we apply the framework.

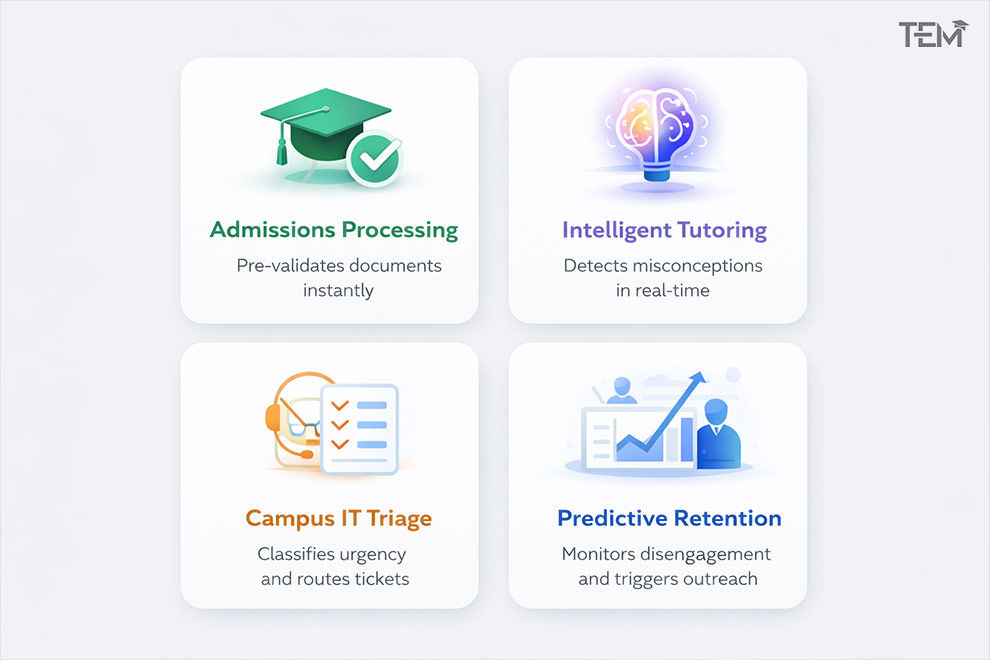

1. Admissions Processing

Problem: Transcript evaluation delays.

Agentic Solution:

- Pre-validates documents against predefined criteria.

- Flags missing information.

- Escalates edge cases to admissions officers.

Impact:

- Faster processing.

- Reduced repetitive workload.

- Humans focus on complex evaluations.

2. Intelligent Tutoring Systems

Problem: Students are struggling silently.

Agentic Solution:

- Detects misconceptions through response analysis.

- Adapts explanations in real time.

- Suggests targeted exercises.

These reflective loops increase engagement and retention.

3. Campus IT Triage

Problem: Overloaded help desks.

Agentic Solution:

- Classifies ticket urgency.

- Routes to the correct department.

- Suggests known solutions before escalation.

This improves resolution speed without sacrificing control.

4. Predictive Retention Monitoring

Problem: Late identification of at-risk students.

Agentic Solution:

- Monitors attendance and LMS participation.

- Detects sudden disengagement patterns.

- Triggers advisor outreach workflows.

When implemented with privacy safeguards, this becomes a proactive support system.
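As one simple illustration of "sudden disengagement", the sketch below compares a student's most recent week of LMS logins with the average of the preceding weeks. The 50 percent drop ratio and the four-week baseline are arbitrary assumptions, and a real deployment would need validated signals and the privacy safeguards mentioned above.

```python
from statistics import mean


def sudden_disengagement(weekly_logins: list[int], drop_ratio: float = 0.5,
                         baseline_weeks: int = 4) -> bool:
    """Flag when the latest week falls below drop_ratio times the recent baseline."""
    if len(weekly_logins) < baseline_weeks + 1:
        return False  # not enough history to judge
    baseline = mean(weekly_logins[-(baseline_weeks + 1):-1])
    if baseline == 0:
        return False  # never engaged; a different kind of signal
    return weekly_logins[-1] < baseline * drop_ratio


print(sudden_disengagement([10, 12, 11, 9, 2]))  # sharp drop in the last week
print(sudden_disengagement([10, 12, 11, 9, 8]))  # ordinary variation
```

A positive flag would start an advisor outreach workflow rather than take any action on the student's record.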

Governance and Ethical Design

In education, architecture alone is not enough.

To align with institutional standards such as the EDUCAUSE AI Ethical Guidelines, institutions must address:

- Data minimization

- Access control

- Consent frameworks

- Auditability

- Bias detection

Compliance is not an afterthought. It is part of the design.

A problem-first approach inherently supports governance because it:

- Defines scope.

- Limits data exposure.

- Establishes accountability metrics.

The Institutions That Get This Right Will Lead the Future

Building agentic AI applications with a problem-first approach is not about adopting the newest model. It is about solving operational friction with clarity and discipline.

The institutions that begin with clearly defined problems and build systems with boundaries, guardrails, and measurable outcomes will move from experimentation to transformation.

Those that chase hype will continue piloting systems that never scale.

The opportunity is not theoretical. It is operational.

If this framework helped clarify your AI strategy, share it with your CIO, innovation team, or academic leadership. The future of education will belong to institutions that design AI with intention.

FAQs

1. What is a problem-first approach in agentic AI?

A problem-first approach in agentic AI means defining the operational bottleneck, constraints, and success metrics before building the system. Instead of starting with a model or tool, institutions begin by identifying a specific inefficiency, such as admissions delays or IT ticket overload, and then design an agent to solve that single, measurable problem. This reduces hallucinations, scope creep, and unnecessary complexity.

2. How do you prevent hallucinations in agentic AI systems?

Hallucinations are reduced by combining deterministic workflows with clear guardrails. This includes separating reasoning from execution, using strict API schemas, limiting data access, validating outputs against trusted sources, and triggering human review when confidence falls below a defined threshold. Observability and logging also help identify patterns that cause unreliable behavior.

3. What is a Minimum Viable Agent (MVA)?

A Minimum Viable Agent (MVA) is a small, controlled agent built to solve one clearly defined task. It operates within strict boundaries, uses a single trusted data source, and includes escalation rules. The goal is to validate performance, cost, and reliability before expanding into more complex multi-agent systems.

4. How can universities implement agentic AI safely?

Universities can implement agentic AI safely by starting with narrow use cases, defining clear data access policies, ensuring compliance with privacy regulations, building human-in-the-loop escalation mechanisms, and continuously monitoring performance. Governance, audit trails, and measurable outcomes should be part of the architecture from day one.