ChatGPT in higher education has moved from experiment to everyday reality. In lecture halls, libraries, and student dorm rooms, generative AI is already influencing how assignments are written, research is conducted, and ideas are developed.

What began as curiosity quickly became a governance challenge. Faculty questioned academic integrity. Students explored new efficiencies. University leaders faced a critical decision: restrict, regulate, or responsibly integrate the technology.

The debate is no longer about whether ChatGPT belongs in universities. It is about how institutions can guide its use without compromising academic values.

Is it a shortcut that weakens learning? Or a tool that can strengthen accessibility, engagement, and critical thinking when used with clarity?

Understanding ChatGPT in higher education requires moving beyond fear and toward evidence-based leadership.

The Real Impact of ChatGPT in Higher Education

ChatGPT is a generative artificial intelligence system built on large language models. It produces human-like text by predicting likely next words from patterns learned across vast amounts of training data.

Unlike earlier educational technologies, it does not simply deliver pre-programmed content. It generates responses in real time.

This difference matters.

Previous digital tools helped students access information. ChatGPT helps them produce it. That shift places generative AI in higher education directly at the center of teaching, writing, and assessment.

Why adoption accelerated so quickly:

- Instantly accessible: no learning curve, no setup

- Conversational and intuitive: feels natural to use

- Directly supports academic tasks: drafting, outlining, summarizing, explaining

According to a 2023 BestColleges survey, 56% of college students reported using AI tools like ChatGPT on assignments, and the majority described it as a support tool, not a replacement for their own thinking.

The EDUCAUSE 2024 Horizon Report identified generative AI as the single most significant technology trend reshaping higher education globally.

In most institutions, AI adoption did not begin with an official strategy. It began with students experimenting independently. Now universities must catch up, not to suppress innovation, but to guide it.

How University Students Are Using ChatGPT Today

Most students use ChatGPT as a support mechanism, not a wholesale substitute for learning. Research consistently bears this out, and the growing range of AI tools that students use shows how embedded this technology has become in campus life.

Common uses include:

- Clarifying Complex Concepts: Asking ChatGPT to explain theories in simpler language or generate alternative examples. Especially valuable for international students working in a second language.

- Structuring and Improving Writing: Refining structure, improving grammar, or brainstorming outlines. It functions much like an advanced writing assistant.

- Summarizing Academic Material: Condensing long readings for revision. However, overreliance risks reducing deep engagement with original sources.

- Generating Study Prompts: Creating practice questions to test understanding before exams.

The concern arises when assistance becomes substitution. If students rely on AI to produce final submissions without reflection, learning outcomes suffer. The line between support and replacement lies at the heart of the academic integrity debate.

ChatGPT and Academic Integrity in Universities

If ChatGPT can draft essays, solve problems, and generate structured arguments, how can institutions ensure that submitted work reflects genuine student effort?

Academic integrity concerns fall into three core risks:

- Substitution of Learning: When students use ChatGPT to generate full assignments without engaging in the thinking process, learning is bypassed rather than supported.

- Blurred Authorship: Generative AI complicates the assumption that submitted work represents a student’s own reasoning, raising questions around transparency and intellectual ownership.

- Equity and Fairness: Students who use AI strategically may gain an uneven advantage over peers who avoid it out of ethical hesitation or lack of awareness.

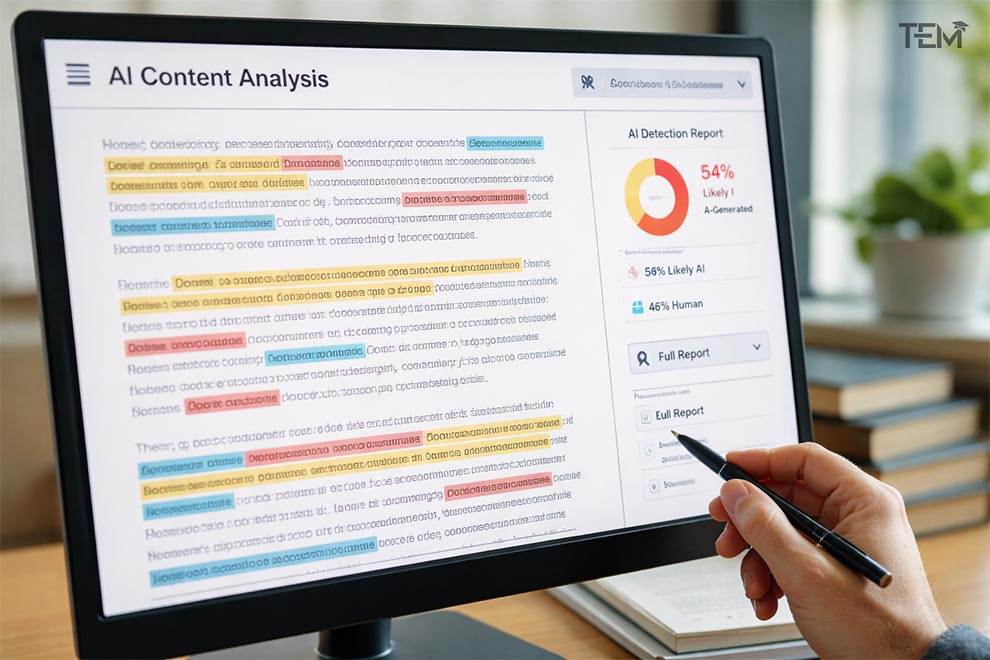

Detection tools have emerged in response. Turnitin integrated AI detection in April 2023. Of the more than 200 million papers it has since reviewed, 22 million (roughly 11%) contained at least 20% AI-generated writing.

GPTZero, built by Princeton undergraduate Edward Tian in January 2023, is widely used by educators for the same purpose.

However, both tools carry false positive rates that remain a concern, and neither is considered fully reliable on its own.

The shift is from restriction to clarity. UNESCO’s Guidance for Generative AI in Education and Research (2023) explicitly recommends defining acceptable AI use rather than pursuing outright bans, noting that prohibition is neither practical nor pedagogically sound.

What strong academic integrity frameworks now include:

- Transparency requirements when AI is used

- Clear distinction between brainstorming and final submission

- Reflective components built into assignments

- Process-based evaluation, not just final output

How Universities Are Responding to Generative AI

Early reactions ranged from alarm to temporary bans. Within months, however, many leading institutions recognized that generative AI would remain part of academic life. The shift from reactive policy to structured integration had begun.

Today, universities fall into three broad approaches:

1. Restrictive but Transitional

Some institutions initially restricted AI use in coursework while developing formal guidelines. These policies often focused on preventing misuse in written assessments.

2. Guided Integration

Other institutions issue detailed guidance on responsible use, including syllabus language templates, faculty workshops, and student training.

Harvard published a framework requiring faculty to explicitly state AI policies in course syllabi. UCL introduced tiered guidance by discipline.

3. Strategic Adoption

A third group embeds AI literacy into curricula. MIT’s Academic Integrity working group (2023) concluded that the most productive path is teaching students to evaluate and question AI outputs, not avoid them.

| Institution | Approach | Key Policy Element |
| --- | --- | --- |
| Harvard University | Guided Integration | Faculty-level AI disclosure in syllabi |
| MIT | Strategic Adoption | AI literacy embedded in coursework |
| UCL | Guided Integration | Tiered guidance by academic discipline |
| Stanford University | Strategic Adoption | AI ethics integrated into core curricula |

The most mature responses share one principle: human oversight remains central. AI governance in universities is increasingly built around transparency, accountability, and alignment with institutional values.

Rethinking Teaching and Assessment in the AI Era

ChatGPT has exposed a structural vulnerability in traditional assessment models. When evaluation focuses solely on final written output, generative AI can replicate expected formats with ease.

This does not mean assessment has failed. It means assessment must evolve.

New approaches universities are adopting:

- Process-Based Evaluation: Students document drafts, reflections, and decision-making steps. The emphasis shifts from the final product to the thinking behind it.

- Applied and Contextual Tasks: Assignments requiring local data, personal reflection, or real-time engagement reduce generic AI responses.

- Oral Defense and Dialogue: Short viva-style discussions help faculty assess genuine understanding directly.

- AI-Integrated Assignments: Students use ChatGPT deliberately, then critically analyze its strengths, limitations, and biases. AI becomes the subject of inquiry, not just a tool.

AI assessment redesign is not about making tasks harder. It is about making learning more authentic.

Opportunities ChatGPT Creates for Universities

When integrated responsibly, ChatGPT creates real opportunity, not just risk. The benefits of AI in education extend well beyond convenience.

1. Expanding Academic Support

ChatGPT functions as an always-available academic assistant. It explains concepts, generates practice questions, and gives structured feedback on drafts. For students hesitant to ask questions in class, this increases confidence and engagement.

2. Enhancing Accessibility

Students with learning differences benefit from step-by-step explanations, alternative formats, and adaptive support. When used thoughtfully, AI lowers barriers rather than creating them.

3. Supporting Faculty Productivity

Faculty can draft rubrics, generate discussion prompts, and organize research notes faster. This reduces administrative load and frees time for deeper student mentoring.

4. Encouraging Critical AI Literacy

Universities are uniquely positioned to teach students how to question AI outputs, detect bias, verify sources, and apply ethical reasoning. This turns ChatGPT from a tool of convenience into an object of critical study.

Risks Universities Must Manage

Opportunity does not eliminate responsibility. Strategic leadership means acknowledging the risks clearly.

1. Misinformation and Hallucinations

ChatGPT produces confident but sometimes factually incorrect responses. A 2023 study published in Scientific Reports (Nature Portfolio) found that large language models routinely generate plausible-sounding citations that do not exist.

Without strong verification habits, students may treat flawed output as authoritative.

2. Bias in Outputs

LLMs reflect patterns in training data. This introduces subtle biases in cultural representation, research framing, and interpretation of complex issues that students must learn to identify.

3. Data Privacy Concerns

Unregulated AI use may expose sensitive student or research data to third-party systems. The EU AI Act (2024) classifies certain AI applications in education as high-risk, reinforcing the need for clear data governance policies.

4. Overdependence

Excessive reliance on AI risks weakening the core academic skills that universities exist to develop: analysis, synthesis, and argumentation.

These risks are not arguments against AI. They are arguments for structured oversight and education.

What University Leaders Must Do Now

ChatGPT in higher education is no longer a theoretical issue. It is operational.

University leaders must move beyond reactive responses and adopt a strategic approach built on four priorities:

1. Establish Clear, Principle-Based Policies

Define acceptable use, require transparency, and build in adaptability. Static rulebooks will not keep pace with technological change. UNESCO’s 2023 guidance provides a strong starting framework.

2. Invest in AI Literacy

Go beyond technical skill. Students and faculty must understand AI’s limitations, biases, ethical implications, and responsible applications, not just how to prompt it.

3. Redesign Assessment Thoughtfully

Prioritize authentic learning, reflection, and applied reasoning. The goal is not to outsmart AI; it is to ensure assessment still measures what education is actually for.

4. Maintain Human Oversight

Final academic responsibility must always rest with humans: educators, administrators, and learners.

Leadership clarity will determine whether ChatGPT becomes a destabilizing force or a transformative one.

A Turning Point for Universities

ChatGPT in higher education is not a temporary disruption. It is a structural shift in how knowledge is accessed, produced, and evaluated.

Universities now face a defining choice. They can approach generative AI reactively, or they can shape its role with intention. The institutions that succeed will not be those that ignore change, nor those that adopt technology without guardrails. They will be the ones that align innovation with integrity.

The future of ChatGPT in higher education will depend on leadership clarity, policy maturity, and a commitment to human-centered learning.

If this perspective helped you think more clearly about the path ahead, share it with educators and academic leaders shaping the next chapter of higher education.

Thoughtful leadership today will define academic excellence tomorrow.

FAQs

- Is ChatGPT allowed in higher education?

It depends on the university and the course. Many institutions now allow ChatGPT under clear guidelines, especially for brainstorming and drafting support. However, using it to submit fully AI-generated work without disclosure is often considered a violation of academic integrity policies.

- Can ChatGPT replace professors in universities?

No. ChatGPT can assist with explanations and writing support, but it cannot replace human mentorship, critical feedback, ethical judgment, or subject expertise. Universities rely on faculty for guidance, research leadership, and student development, roles that AI cannot replicate.

- How can students use ChatGPT responsibly in university?

Students can use ChatGPT responsibly by treating it as a study aid rather than a shortcut. This includes using it to clarify concepts, generate practice questions, or improve structure while ensuring the final work reflects their own thinking and understanding.

- Are universities developing official AI policies?

Yes. Many universities are creating or updating policies to address generative AI tools like ChatGPT. These policies typically define acceptable use, require transparency when AI is used, and provide guidance for faculty and students on ethical integration.