Over 88% of students now use generative AI tools for academic work. That number was just 53% in 2024. AI academic integrity has quickly become one of the most urgent challenges in education today.

AI academic integrity means using artificial intelligence tools honestly and responsibly to support learning, not to replace it. It requires students to be transparent about how they use AI and to ensure their final work reflects their own thinking.

Since ChatGPT launched in November 2022, the pace of AI adoption in schools has been staggering. Today, AI writing assistants, AI tutors, and ChatGPT alternatives like Claude and Copilot are part of daily student life. Used the right way, AI genuinely helps students learn through personalized learning, immersive learning experiences, and better accessibility.

This article covers the real challenges, risks, and best practices around AI academic integrity for both students and educators.

What Is AI Academic Integrity?

AI academic integrity is the ethical and honest use of artificial intelligence tools to support learning without bypassing individual effort. It requires students to maintain transparency about their tool use and ensures that the final work reflects their own understanding and critical thinking.

The International Center for Academic Integrity defines integrity around five core values: honesty, trust, fairness, respect, and responsibility. These values do not change just because the technology does.

How AI Is Changing Education

AI in higher education has been growing steadily since the late 2010s. But ChatGPT’s launch changed the pace entirely. Within months, educators at every level were dealing with AI-generated essays, automated homework answers, and students using AI as a crutch instead of a tool.

The Growth of AI Tools in Schools

- AI writing assistants like ChatGPT, Gemini, Claude, and Copilot are now widely used by students.

- AI tutors and personalized learning platforms offer targeted instruction based on individual progress.

- STEM education tools powered by AI now help students work through math, coding, and science problems step by step.

- Immersive learning environments use AI to simulate real-world scenarios in medical, engineering, and legal training.

According to a 2024 survey by Wiley, 45% of students used AI in their classes over the past year, compared to just 15% of instructors. This gap creates a serious awareness problem for institutions trying to monitor and manage AI use fairly.

Two Sides of AI

AI offers real benefits. It can personalize learning for each student, provide instant feedback, automate repetitive tasks, and make education more accessible for students with disabilities or language barriers. But the same tools that can help a student understand a difficult concept can also write their entire assignment for them in under 30 seconds.

“AI is a tool to enhance learning and teaching, not a replacement for educators.”

That distinction matters more than ever in 2026.

Major Challenges of AI Academic Integrity in 2026

1. AI-Assisted Cheating

AI has made certain forms of academic dishonesty far easier. Students can generate essays, solve complex problems, or produce entire research summaries without engaging with the material at all. AI-related misconduct now represents 60 to 64% of all cheating cases in higher education globally, a nearly 400% increase over just three academic years.

A 2024 Frontiers in Education study found that 39% of students use large language models to answer assessments, and 7% admit to using AI to write entire papers.

2. AI Plagiarism and Detection Challenges

Detecting AI-generated content is still an imperfect science. Tools like Turnitin claim up to 98% accuracy for AI detection, and GPTZero reports a 1 to 2% false positive rate. But students are finding ways around detection by lightly editing AI outputs, mixing AI and human writing, or using paraphrasing tools to obscure AI origins.

The UK currently leads in flagged content, with 33% of student papers identified as potentially AI-generated, even though only about 10% of academic content worldwide is estimated to be fully AI-written. Discipline rates for AI-related plagiarism rose from 48% in 2022-23 to 64% in 2023-24.
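The arithmetic behind these figures shows why even a small false positive rate matters at scale. The sketch below is illustrative only: the cohort of 10,000 submissions is hypothetical, the 10% AI-written share borrows the worldwide estimate quoted above, and it assumes the vendor-claimed 98% figure can be read as a detection rate and that the false positive rate sits at the 2% end of the reported range. The function name `expected_flags` is made up for this example.

```python
def expected_flags(total, ai_share, detection_rate, false_positive_rate):
    """Return (true_flags, false_flags, precision) for a batch of submissions."""
    ai_written = total * ai_share
    human_written = total - ai_written
    # AI-written papers the detector correctly flags
    true_flags = ai_written * detection_rate
    # Human-written papers the detector wrongly flags
    false_flags = human_written * false_positive_rate
    # Share of all flags that point at genuinely AI-written work
    precision = true_flags / (true_flags + false_flags)
    return true_flags, false_flags, precision


# Hypothetical cohort; none of these inputs are measured values.
true_flags, false_flags, precision = expected_flags(
    total=10_000, ai_share=0.10, detection_rate=0.98, false_positive_rate=0.02
)
print(f"Correctly flagged AI papers: {true_flags:.0f}")
print(f"Human-written papers wrongly flagged: {false_flags:.0f}")
print(f"Share of flags that are correct: {precision:.1%}")
```

Under these assumptions, about 180 of the 1,160 flagged papers would be human-written. That is why a flag is better treated as a prompt for a conversation than as proof of misconduct.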

3. Over-Reliance on AI

Perhaps the biggest long-term risk is not cheating but dependency. When students use AI to skip the hard work of thinking through a problem, they miss the development of critical thinking, problem-solving, and communication skills. These are skills that no AI can hand them on graduation day.

As Marcus D. Taylor, MBA, an educator and instructional design expert, puts it:

“Dependence on AI may hinder critical thinking and problem-solving skills, leading to knowledge gaps.”

4. Inconsistent AI Policies

Many schools are still figuring out what the rules actually are. According to institutional surveys, only 58% of educational institutions had updated their academic policies to address AI use as of 2024. This inconsistency creates confusion for students who are unsure whether using AI for a given assignment is allowed or not.

5. Data Privacy and Security Risks

When students and educators use AI platforms, they often share personal information, academic work, and even sensitive research data. Most major AI platforms collect and use this data in some form. Schools need clear AI governance frameworks that address what data can be shared with which tools and under what conditions.

The Real Risks of AI Misuse in Education

AI misuse in education is a form of academic dishonesty, and its effects go beyond getting a better grade on one paper. The consequences are broader and longer-lasting.

- Loss of Learning Skills: When the AI does the thinking, the student misses the mental “exercise” required to master a subject.

- Reduced Creativity: Relying on generated ideas can stifle a student’s unique voice and perspective.

- Ethical Violations: Submitting AI work as one’s own is a fundamental breach of trust that can lead to expulsion.

- Unfair Academic Advantage: Students using AI to skip the work gain an edge over those who follow the rules, undermining the entire grading system.

A 2024 Wiley survey found that 96% of instructors believe at least some of their students cheated over the past year, up from 72% in 2021. Meanwhile, 53% of students say there is more cheating now than last year.

Benefits of AI in Education When Used Ethically

When used correctly, AI is a powerful ally. Responsible use delivers clear benefits:

- Personalized Learning: AI tutors adapt to a student’s pace, offering help where they struggle most.

- Immersive Learning: AI-driven simulations allow students to explore history or science in 3D environments.

- Accessibility Support: AI tools provide real-time speech-to-text or translation for students with diverse needs.

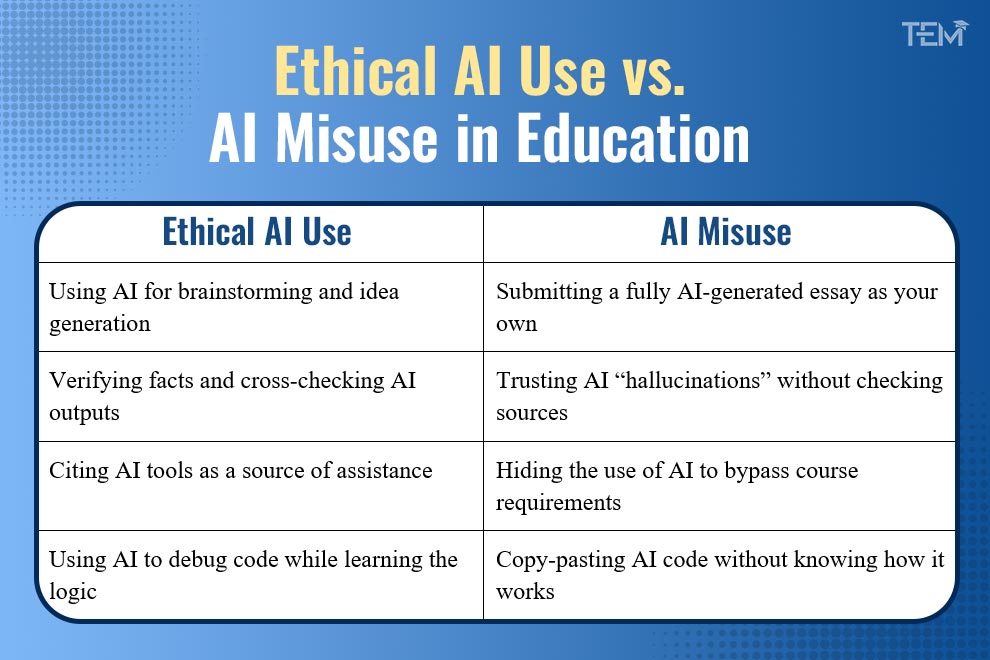

Examples of Ethical AI Use for Students

The mantra for 2026 is: “AI should support learning, not replace it.”

- Concept Explanations: Asking an AI to “explain quantum physics like I’m five” to get a baseline understanding.

- Study Summaries: Using AI to turn long lecture notes into concise bullet points for review.

- Practice Quizzes: Generating mock tests to check for knowledge gaps before an exam.

- Language Improvement: Using tools such as ChatGPT, DeepL, or grammar checkers to polish original writing.

Best Practices for Students: How to Maintain AI Academic Integrity

Here is a practical guide for students to maintain AI academic integrity in 2026:

- Use AI for guidance and understanding, not as a replacement for your own work.

- Always verify AI-generated information against credible sources. AI can and does hallucinate facts.

- Cite AI tools when required by your institution. Transparency matters.

- Never submit fully AI-written work as your own original submission.

- Follow your school’s specific AI policy for each course, since rules vary by instructor and assignment.

- Treat AI like a study partner, not a ghostwriter.

Ethical AI Use vs. AI Misuse in Education

How Schools and Universities Are Responding to AI Academic Integrity

1. AI Detection Tools

68% of schools now use AI detection tools to flag potentially AI-generated content. Turnitin’s AI detection system, integrated into Canvas, Blackboard, and Moodle, is the most widely used.

2. Assessment Redesign

45% of institutions are redesigning assessments to reduce the effectiveness of AI cheating. This includes more oral defenses, process-based assignments, in-class writing, and projects that require personal reflection or local context that AI cannot easily replicate.

3. AI Literacy Programs

Forward-thinking institutions are teaching students how to use AI tools responsibly as part of their curriculum. Rather than treating AI as a threat to be blocked, these programs help students understand AI’s capabilities, limitations, and ethical implications.

The Future of AI Academic Integrity: What to Expect Beyond 2026

The future of AI in higher education centers on a partnership between human intelligence and machine capability. By 2026, the focus has shifted from banning technology to establishing strong AI governance. Educational institutions are moving away from the “arms race” of detection and evasion. Instead of trying to win a battle against better software, schools are building a culture of trust and transparency.

1. Human-AI Collaborative Learning

Human-AI collaborative learning is the new standard. This approach teaches students to work alongside AI to amplify their skills rather than replace them. Graduates must master AI collaboration as a formal skill to succeed in the modern workforce. This ensures that immersive learning experiences help students grow without losing their ability to think critically.

2. AI Transparency in Assessments

AI transparency in assessments is also becoming a requirement. Much like citing a book, students must now document how they used AI in their work. This shift values the “process” of learning as much as the final result. It creates a clear record of ethical AI use in education and holds students accountable.
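One way to picture such a record is as a short structured log attached to the assignment. The sketch below is a hypothetical format, not one prescribed by any institution: the class `AIUseEntry`, its field names, and the helper `build_disclosure` are all assumptions made for illustration.

```python
from dataclasses import dataclass, asdict
import json


@dataclass
class AIUseEntry:
    """One documented use of an AI tool (all field names are hypothetical)."""
    tool: str
    purpose: str
    used_in_final_text: bool


def build_disclosure(assignment, entries):
    """Bundle the entries into a JSON statement a student could attach."""
    return json.dumps(
        {"assignment": assignment, "ai_use": [asdict(e) for e in entries]},
        indent=2,
    )


entries = [
    AIUseEntry("ChatGPT", "Explained a concept before drafting", False),
    AIUseEntry("DeepL", "Checked grammar in my own paragraphs", True),
]
print(build_disclosure("History essay", entries))
```

A plain written statement covering the same three points (which tool, for what purpose, and whether its output appears in the final text) would serve equally well where a course does not ask for a specific format.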

3. AI Governance Frameworks

Finally, schools are adopting formal AI governance frameworks. These rules protect student data, ensure equal access, and define academic dishonesty with AI without stifling innovation. By using these structured policies, universities uphold AI academic integrity while preparing students for an AI-driven world.

Conclusion

AI academic integrity is a defining challenge for modern education. With 88% of students using AI for academic work and AI-related misconduct rising, the focus must shift from policing to proper guidance. The technology itself is not the enemy; the benefits of AI, such as personalized and immersive learning, are invaluable. The real risk lies in AI misuse, which robs students of critical thinking skills.

Moving forward, institutions must prioritize AI governance and clear policies. By fostering AI literacy, we ensure that technology serves the learning process rather than replacing it. Students and educators must learn to use AI responsibly to build a smarter and more ethical future of education.

FAQs

- What are the risks of using AI in education?

The primary risks of using AI in education include the loss of critical thinking skills and creativity as students become over-reliant on machine-generated output.

- Is using ChatGPT for homework cheating?

Using ChatGPT for homework is not always cheating. It depends on how you use it. Using ChatGPT to explain concepts or help you learn is acceptable, but using it to write your entire answer and submitting it as your own work is considered cheating.

- Can AI-generated content be detected?

Tools exist that can detect AI-generated content, but they are not 100% accurate. Schools are now focusing more on “learning assurance” and process tracking rather than just detection.

- Why is academic integrity important in 2026?

Academic integrity is important in 2026 to ensure that degrees remain a true reflection of a student’s actual skills and knowledge in an AI-driven world. It preserves the value of education by fostering critical thinking and original thought, preventing technology from replacing essential human learning.